PNSQC Pulse - April 22, 2026

CFP Last Call | April Meetup Recap

Call for Papers

You don’t need a finished paper to speak at PNSQC. You just need an idea.

Submission deadline: May 4, 2025

Have you solved a tricky quality problem at work? Navigated a painful failure and come out with hard-won lessons? For more than 40 years, PNSQC has been the place where practitioners share those experiences. We’re accepting paper proposals for this year’s conference, and we’d love to hear from you.

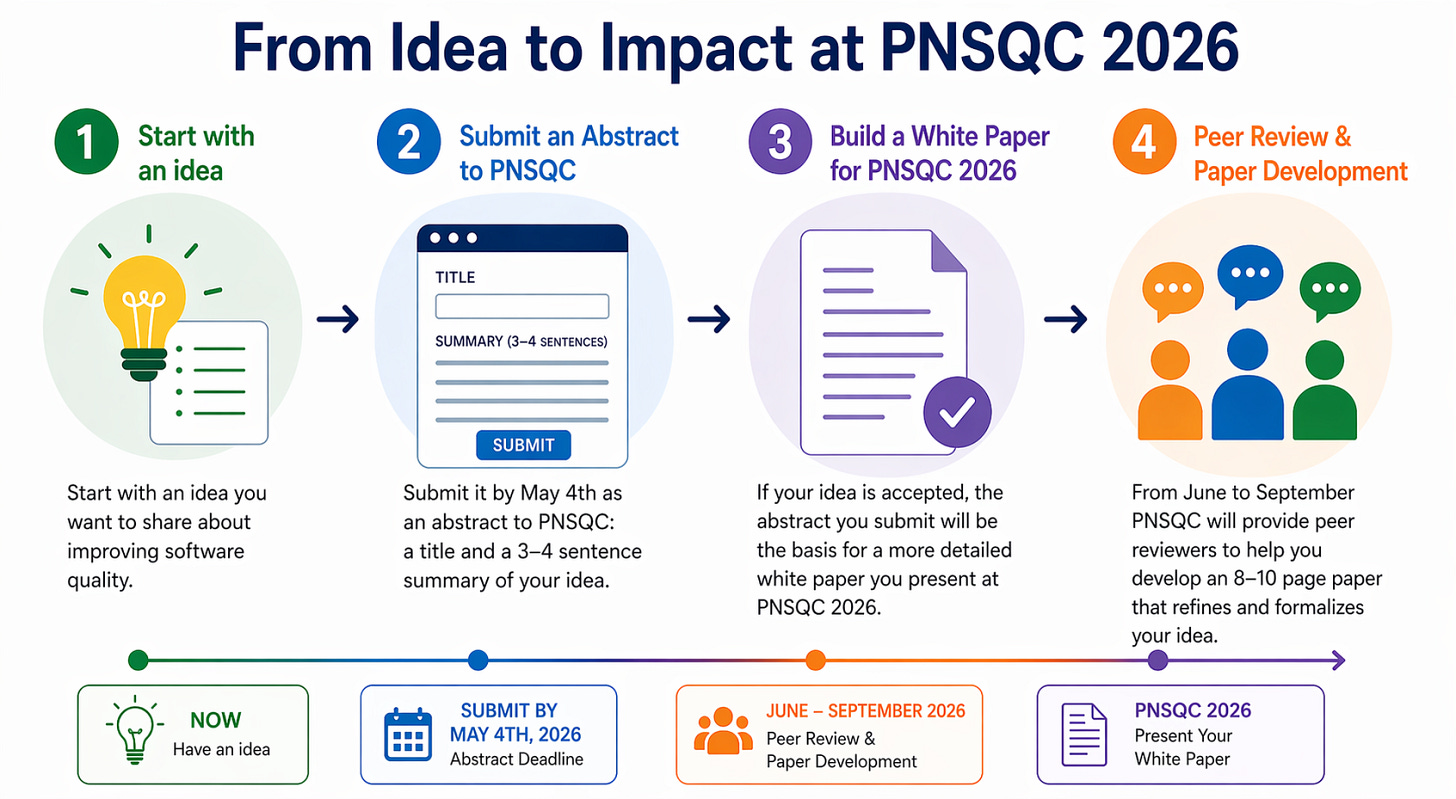

How it works

Getting started takes little effort: submit a title and 3–4 sentences describing your idea. If your proposal is accepted, PNSQC will work with you to develop it into a short whitepaper. Unlike at many conferences, accepted papers are peer-reviewed and published in our annual proceedings, a lasting contribution to the body of knowledge for the software quality profession.

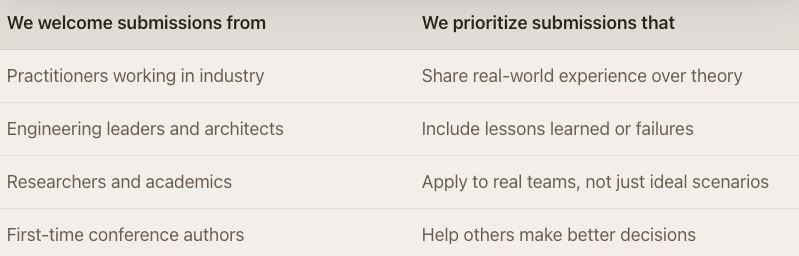

Who should submit

If you’ve never presented at a conference before, this is a great place to start. PNSQC actively encourages first-time authors, and the paper development process means you won’t be doing it alone.

Meetup recap — April 2025

AI-augmented manual testing: how PLUS QA built MaxAI without disrupting their team

Presented by Ryan Wilder and Gianni Zamora at PLUS QA, Southeast Portland

At our April meetup, Ryan Wilder (VP of Operations) and Gianni Zamora (Software Engineer) from PLUS QA walked us through MaxAI — an in-house tool they built to amplify manual testing without disrupting the way their team already works.

The core philosophy: don’t add another tool to the pile. PLUS QA works with many external clients at once, each with different systems and timelines, sometimes starting testing on a Monday for a Friday go-live. Their answer was to embed AI directly into their existing Test Platform, so testers can “come home to PLUS QA”: a familiar, consistent workflow, no matter how chaotic the client project.

MaxAI handles the work that doesn’t require human expertise (checking broken links, page titles, image loading, and DOM-level issues), freeing testers to focus on the nuanced, experiential testing that AI can’t do. Bugs are filed as drafts first and stay invisible to clients until a human expert reviews and approves them. As Ryan put it: “Max is our sous chef. We still need a MasterChef to make sure the plate is ready before it goes to the client.”
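
The draft-first workflow is essentially a human review gate. Here is a minimal Python sketch of that idea. It is not PLUS QA’s code, and `BugStatus`, `BugReport`, `approve`, and `client_view` are hypothetical names chosen for illustration.

```python
from dataclasses import dataclass
from enum import Enum
from typing import List, Optional


class BugStatus(Enum):
    DRAFT = "draft"        # filed automatically; visible only to the internal team
    APPROVED = "approved"  # signed off by a human reviewer; visible to the client


@dataclass
class BugReport:
    title: str
    status: BugStatus = BugStatus.DRAFT  # every new bug starts life as a draft
    reviewer: Optional[str] = None       # who approved it, once someone has


def approve(bug: BugReport, reviewer: str) -> None:
    """Record the human sign-off that publishes a draft bug."""
    bug.status = BugStatus.APPROVED
    bug.reviewer = reviewer


def client_view(bugs: List[BugReport]) -> List[BugReport]:
    """What the client sees: approved bugs only, never drafts."""
    return [bug for bug in bugs if bug.status is BugStatus.APPROVED]
```

Because drafts are the default, nothing the AI files can reach a client unless someone explicitly calls the approval step.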

The tool uses domain-restricted crawling to keep from going off the rails on dynamic pages, relies on GPT-4o for image interpretation, and passes a screenshot, selector, and DOM snippet together as context to improve accuracy. Test cases are exported in a fully editable state, as a rough draft rather than a final product, so PMs can refine them just as they would anything written manually.
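
For readers curious what “domain-restricted crawling” can look like, here is a minimal Python sketch under stated assumptions. It is not PLUS QA’s implementation: it is a breadth-first crawl that only follows links on the starting URL’s host and stops after a page cap. `crawl_same_domain`, the `fetch_links` callable (a stand-in for real page fetching and HTML parsing), and the `max_pages` limit are all hypothetical.

```python
from collections import deque
from typing import Callable, Iterable, List
from urllib.parse import urldefrag, urljoin, urlparse


def crawl_same_domain(
    start_url: str,
    fetch_links: Callable[[str], Iterable[str]],  # hypothetical: returns raw hrefs found on a page
    max_pages: int = 100,  # hypothetical cap so dynamic pages can't grow the crawl forever
) -> List[str]:
    """Breadth-first crawl that never leaves the start URL's host."""
    allowed_host = urlparse(start_url).netloc
    seen = {start_url}
    queue = deque([start_url])
    visited: List[str] = []

    while queue and len(visited) < max_pages:
        url = queue.popleft()
        visited.append(url)
        for href in fetch_links(url):
            # Resolve relative links against the current page and drop #fragments,
            # so "/about#team" and "/about" count as the same page.
            absolute, _fragment = urldefrag(urljoin(url, href))
            parsed = urlparse(absolute)
            # The domain restriction: skip other hosts and non-HTTP links (mailto:, javascript:).
            if parsed.scheme not in ("http", "https") or parsed.netloc != allowed_host:
                continue
            if absolute not in seen:
                seen.add(absolute)
                queue.append(absolute)
    return visited


if __name__ == "__main__":
    # An in-memory "site" standing in for real pages.
    site = {
        "https://example.com/": ["/about", "https://other.com/", "mailto:hi@example.com"],
        "https://example.com/about": ["/", "/about#team", "/contact"],
        "https://example.com/contact": [],
    }
    # Visits /, /about and /contact; skips other.com and the mailto: link.
    print(crawl_same_domain("https://example.com/", lambda u: site.get(u, [])))
```

The page cap is one simple guard against dynamic pages that generate endless unique URLs; a production crawler would likely need more, such as rules for query parameters and respect for robots.txt.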

The Q&A surfaced some rich territory: domain knowledge and HIPAA-style rules, localization testing, testing for AI agents as end users, and how to handle the fact that correctness in QA is often subjective. On that last point, Ryan’s take was clear: human experts make the final call, because at least you can ask them why.

On the roadmap: deeper accessibility testing, desktop application support via Playwright, and potentially continuous monitoring for clients. On accessibility, Ryan was candid that the experiential side (does it feel “scratchy” or “nice” to use a screen reader on this page?) is far beyond what AI can assess today.

Missed it? The recording will be available on our YouTube channel soon.